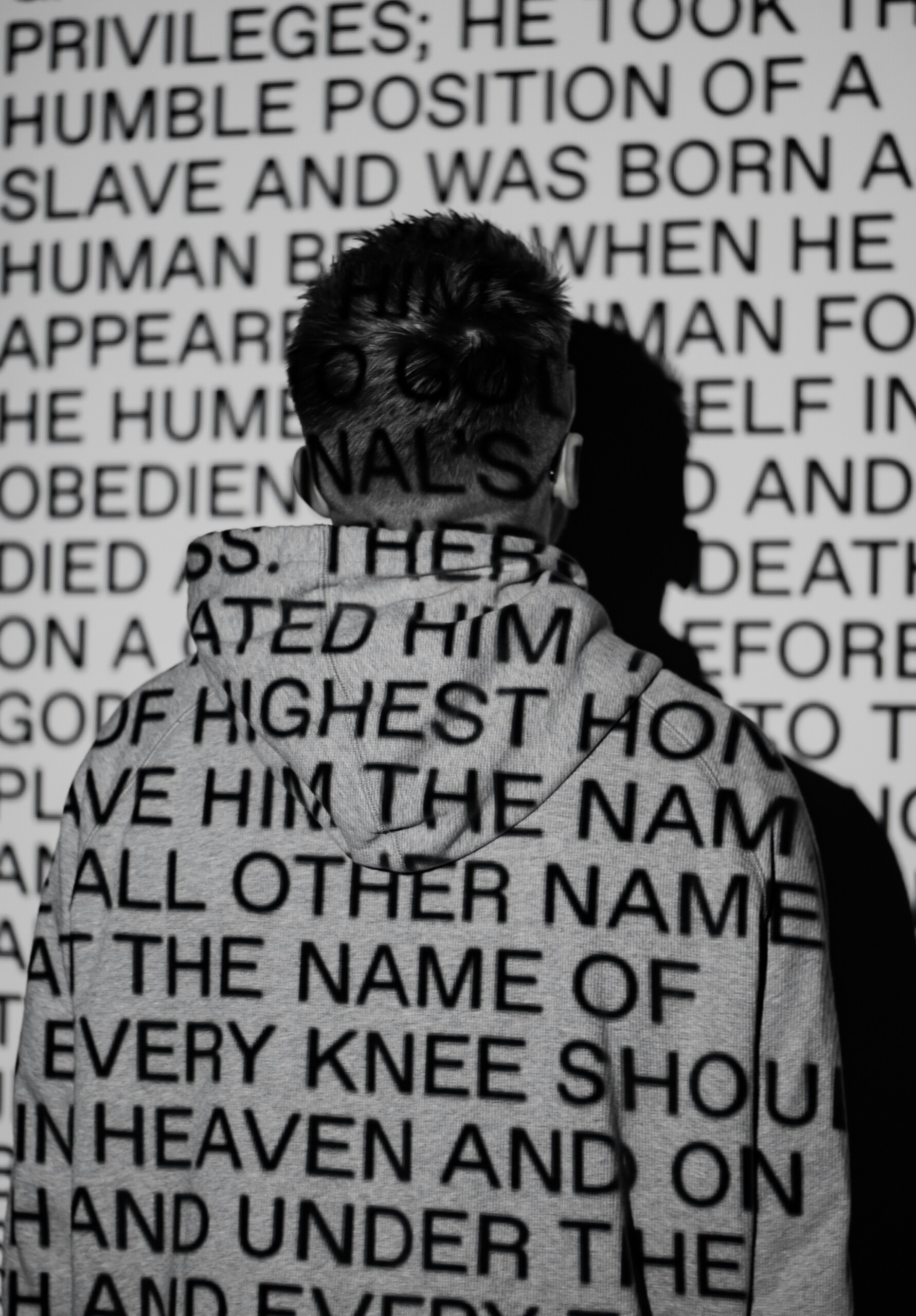

Artificial intelligence (AI) is making its way into nearly every sphere of human life. This is certainly true of higher education, for good and ill, and Christian colleges and universities are no exception. My own alma mater, Olivet Nazarene University, in addition to grappling with some of the most pressing AI-related ethical considerations around student learning, has just launched a new AI program to navigate the urgent tensions inherent in this technology. I hope what follows might provide something of value to administrators and professors at Christian institutions as they consider how to describe the array of emerging AI functions in their midst. Such deliberations will only become more urgent with time, in educational spaces and throughout society. I expect that Christian leaders will find much of what I argue here to be intuitive, given that I am drawing on a Christian anthropology. My main thesis is not profound, but I hope it is profitable as a messaging framework for Christian institutions as they approach internal and external communications on this ever-evolving topic.

As the capabilities of AI have increased, the discourse around it has begun to shift from viewing the technology as merely a powerful tool to describing it as some new sort of agent in the world. Proponents of this view talk about AI in ways that obscure its limits by attributing to it uniquely human capacities. They do so because they misunderstand the created nature of human freedom and reason that can never apply to AI functions. The spiritual, psychological, moral, and social repercussions of this rhetorical error could be devastating over time if left unchecked.

Beyond the dire practical consequences, we must fulfill our obligation to speak truthfully. Language that anthropomorphizes AI is dangerously misleading, not because such rhetoric is prima facie absurd (though in some respects it is absurd) but because of how impressive the technology is even in its infancy, displaying functions that mimic some distinctly human traits in unprecedented ways.

Consider the idea of AI consciousness, for example. “Interesting Times,” a podcast hosted by Ross Douthat of The New York Times, recently released an episode titled, “Anthropic’s Chief on A.I.: ‘We Don’t Know if the Models Are Conscious’.” Despite being in the early stages of the AI revolution, leaders in the field are already leaving the door open to the possibility of AI consciousness, particularly as models progress toward artificial general intelligence (AGI). We can be sure, however, about that of which Anthropic’s CEO Dario Amodei and many others seem unsure: AI models, without exception, will never be capable of true consciousness. Any appearance of consciousness that AI models may exhibit is the result of elaborate imitation or simulation at a dizzying scale and speed, and nothing more. As human interaction with AI becomes more widespread, attributing consciousness where none exists could have massive consequences.

In saying that, I do not at all mean to diminish the technological achievement, global significance, or unfathomable potential of AI. I am simply making a definitive pronouncement on the kind of thing AI models can never be: agents with a will capable of exercising freedom and all that freedom entails. On this basis alone, AI models can never seek to understand, express love, exercise devotion to God, or otherwise truly act in the world. In this time of astonishing technological and cultural revolution, the language we use to discuss AI must proceed with thoughtfulness and discipline, antithetical as such an approach may be to our cynical, superficial, and performative age.

Elite Confusion over the Human/AI Divide

This human-created technology will increasingly have the capacity to imitate, simulate, and even exceed a range of human capacities. Distinguishing a human person from an AI simulacrum, therefore, will be essential. Which brings me to my initial inspiration for this essay. I continue to be troubled by the extent to which journalists, industry experts, tech executives, and individual users speak about AI in ways that suggest it possesses genuine human qualities.

In some respects, AI is merely the latest in a long line of technological developments capable of performing human-like functions. The difference now is the perception that AI has advanced so much that it has abilities and traits that in the past belonged to humans alone. In Douthat’s interview with Amodei, for example, we hear that AI models “think,” “consider,” “express occasional discomfort,” have a “duty to be ethical,” and, as I mentioned earlier, may even be “conscious.”

Just a few weeks later, we find that language echoed in Ezra Klein’s interview with Amodei’s colleague, Jack Clark, Anthropic’s co-founder and director of policy. While talking about this “new era of AI agents,” Klein notes that “we are moving from chatbots to agents, from systems that talk to you to systems that act for you.” Klein continues:

“[A]s my understanding of the math and reinforcement learning goes, we’re still dealing with some kind of prediction model. On the other hand, when I use them, it doesn’t feel that way to me. It feels like there’s intuition there. It feels like there is a lot of context being brought to bear. To the extent that it’s a prediction model, it doesn’t feel that different from saying ‘I’m a prediction model’” [emphasis mine].

Moments later, Klein explains that he is grasping for the right metaphor for thinking about these new AI “agents.” What I want to underscore, however, is how enticing it clearly is for Klein to resort to anthropomorphizing these AI agents, even if he ultimately holds back from going all the way.

We see this tendency in his description of how it “feels” to interact with this technology. But how many reflexive, unthinking references to the “intuitions” of AI will it take before recognition of that description as only a metaphor fades? I suspect not many. The repetition will have a solidifying effect, especially once a critical mass of AI users (the vast majority of whom will have no understanding whatsoever of how AI really works) experience similar feelings of wonder.

Later in the interview, Clark says that

“the smarter we make these systems, the more they need to think — not just about the action they’re doing in the world but about themselves in reference to the world…To solve really hard tasks, [an AI agent] now needs to think about the consequences of its actions.”

Klein and Clark go on to reflect on the “digital personality dimension” of AI. Clark discusses how, in advance of having a “hard conflict conversation” with a colleague, he will periodically consult Anthropic’s AI model “Claude” for insights on how to approach the situation. He may not believe Claude actually “thinks” in the same way as a human, but he does not hesitate to delegate a task historically reserved for humans (i.e., making prudential judgments about interpersonal interactions) to an AI model with the expectation that it will serve the purpose just as well. My reservations about using Claude in this manner could not be stronger, but the deeper concern here is how Clark’s casual portrayal of his reliance on Claude for navigating human relationships reflects a deeply mistaken estimation of the model’s true nature and capabilities. Claude has no “personality” and certainly cannot be expected to “think” or make relational or moral judgments.

Clark might respond to this criticism by noting that he is merely seeking descriptive metaphors to help listeners understand what functions Claude and other AI models can offer without intending to take a metaphysical position regarding their “true” nature. To which I respond: the stakes of imprecise language in this case are simply too high, and anthropological metaphors for AI fail the moment they’re deployed because the difference between the human person and AI is one of kind, not degree.

The Vital Corrective of Christian Anthropology

In 2024, the Vatican released Antiqua et Nova, a splendid reflection “on the Relationship Between Artificial Intelligence and Human Intelligence” that can be edifying for Catholic and non-Catholic Christians alike. The document sorts AI into “narrow AI” and “Artificial General Intelligence” (AGI). Speaking of the former, the authors observe: “By analyzing large datasets to identify patterns, AI can ‘predict’ outcomes and propose new approaches, mimicking some cognitive processes typical of human problem-solving.” (8) Of the latter, they write:

“While each ‘narrow AI’ application is designed for a specific task, many researchers aspire to develop what is known as [AGI]—a single system capable of operating across all cognitive domains and performing any task within the scope of human intelligence.” (9)

After outlining the state of AI research and understanding among experts, Antiqua et Nova argues that underlying these perspectives “is the implicit assumption that the term ‘intelligence’ can be used in the same way to refer to both human intelligence and AI.” (10) Antiqua et Nova repudiates that assumption entirely. All Christians should do the same.

Here’s the critical point: “AI’s advanced features give it sophisticated abilities to perform tasks, but not the ability to think” (Antiqua et Nova, 12). In other words, the human capacity to “think” is not a mere processing of information in an orderly way toward some application. It also implicates the capacities of intellect (the intuitive grasp of the truth) and reason (the process of inquiring, analyzing, and deliberating that leads to judgment) (Antiqua et Nova, 14). Next, the document’s authors delve into what it means for the human person to be “rational,” not in a reductive way but in a fully integrated sense:

“Describing the human person as a ‘rational’ being…recognizes that the ability for intellectual understanding shapes and permeates all aspects of human activity…In this sense, the term ‘rational’ encompasses…‘knowing and understanding, as well as willing, loving, choosing, and desiring’…This comprehensive perspective underscores how, in the human person, created in the ‘image of God,’ reason is integrated in a way that elevates, shapes, and transforms both the person’s will and actions” (15) [emphasis added].

Language fulfills many purposes, not least of which is enabling us to make meaningful distinctions. Human persons, who have the capacity to act, and AI agents, which have the capacity to function, are not merely different points on a continuous spectrum. They are fundamentally different things. As AI becomes more sophisticated and its presence in our world more ubiquitous, blurring the line between human and AI will become irresistible to many. When AI is paired with coming breakthroughs in robotics technology, the problem is sure to worsen dramatically. It is, therefore, critical during this time of transition to push back against it. We must establish habits of language to reinforce habits of mind to uphold what is true about ourselves in contrast to the tools we produce, no matter how capable those tools become.

Christians should be especially receptive to this argument – and prepared to advance it – though I hope it also finds a sympathetic hearing beyond my own faith community. What does it mean for someone to take conscious action? It is a capacity made possible by the nature of the human person, who consists of desires, intellect, and will (among other traits). God designed our nature for the ultimate purposes of enabling us to receive his love, out of which he created all things, and to love him in return. All other exercises of human freedom are derived from the freedom God has so graciously granted to us to love him.

The uniquely human capacity to act may be observed in a neurologist diligently seeking an effective diagnosis and treatment for a patient’s persistent pain, a mother sacrificing day-after-day in the care of her baby, or a soldier earnestly praying for courage and protection. No AI system will ever truly seek to understand, show sacrificial love, or pursue God. Let us take great care to speak accordingly. Christian higher ed is especially well-positioned to lead the way.

There are several footnotes and references, but no bibliography or footnotes for the reader to follow. Can these be added so that we can dig deeper into those resources?

Sam, the references and page numbers are from documents that are hyperlinked in the text. Sorry for any confusion.

Very nicely argued, although as I write this reply, I notice that this program keeps trying to complete my thoughts (wording) for me. This function has the effect of making me think of wording that I never intended to use, or of thoughts that never occurred to me without a ‘prompt.’

My apprehension about AI comes with the thought that ‘writer assistance programming’ could eventually influence my patterns of thought in directions I don’t intend to take, or at least to misdirect me from drawing conclusions without unconsciously bowing to a covert influence.

I am concerned that students will accept being guided in their thought process by influences that pull their thinking away from what God is trying to tell them about the world through His Word and Holy Spirit, even from universally accepted human virtues! The solution to all of this is to eliminate as much as possible the influence of AI in the development of the thought process in young people. Training students to think without the use of AI (potentially poisoned more and more) is likely the only answer; one must ask, “Who will be left to teach people how to reason unassisted?”

I also wonder whether or not you yourself used AI “resources” to help compose the piece above. I have used AI to get started writing something scholarly a time or two, and when I have, I noticed that while I saved a little time, AI actually limited my scope of thinking about my subject. I noticed that AI could not tell me precisely what I wanted to know, nor could it list all the resources in which I might be interested for the purposes of writing a meaningful piece. If AI remains a supple assistant only, I can see how it might save a scholar time, or help one access/expand the memory-bibliography in one’s head (I am 70). But saving time is not always important to the success of the reasoning processes we use to write meaningfully or even fairly.

Should the Lord tarry, I think we may be in for a very rough ride indeed. We will have to learn how to write Christianly when confronting our ‘Weston,’ that is, until we figure out, like Ransom did, what God had in mind for us to do in response to our opponent’s evil, destructive influence. We must not forget that AI is synthetic; it looks like the real ‘think,’ but it will never pass as the genuine article.

Thanks for inspiring a little ‘free thought’ this morning! May God give you help in your battle!

P.S.: Have you ever noticed that “AI” looks like the first two letters in “Algorithm”?

Dr. John Hugo, DMA, Professor of Music, Liberty University

Dr. Hugo,

Thank you for reading the piece and for sharing some of your careful thinking about the proper application and pitfalls of AI in scholarly work and student learning. You raise critical issues in which prudence is and will remain key. I do not use AI in my research or writing process, and that was the case with this article. I share some of your concerns about how such usage might constrain or undermine my own thinking. I don’t necessarily think I have landed in the right spot in my total abstinence from AI. Likely some analogue to the virtue of chastity is more appropriate with respect to this powerful tool. I just haven’t landed on what that should look like as I read, reflect, and write. Whatever its right application might be, viewing it as having personality or consciousness or the capacity to understand, etc., etc. is extremely dangerous, and Christians must resist giving these false notions any foothold.

With gratitude,

Nathan